Foresight Ventures: Rational Insights into Decentralized Computing Networks

Author: Ian Xu@Foresight Ventures

TL;DR

- The main convergence points of AI + Crypto currently fall into two major categories: Distributed Computing Power and ZKML. For ZKML, you can refer to one of my previous articles. This article will focus on analysis and reflections on the decentralized distributed computing power network.

- As large AI models develop, computing power will be the major battleground of the coming decade and one of the most important resources for human society. It will not remain a matter of commercial competition alone; it will also become a strategic resource in great-power rivalry. Investment in high-performance computing infrastructure and reserves of computing power is expected to rise exponentially.

- The demand for decentralized distributed computing power networks is greatest in the training of large AI models, but it also faces the greatest challenges and technological bottlenecks, including the need for complex data synchronization and network optimization problems. In addition, data privacy and security are also significant constraints. Although some existing technologies can provide preliminary solutions, they are still not applicable in large-scale distributed training tasks due to the massive computational and communication overheads.

- Decentralized computing power networks are more likely to find practical adoption in model inference, where the room for future growth is expected to be large. Inference still faces challenges such as communication latency, data privacy, and model security, but compared with model training its computational complexity and data interactivity are lower, which makes it better suited to a distributed environment.

- Through the case studies of two startups, Together and Gensyn.ai, the overall research direction and specific ideas of decentralized distributed computing power networks are illustrated from the perspectives of technical optimization and incentive layer design.

1. Decentralized Computing Power — Large Model Training

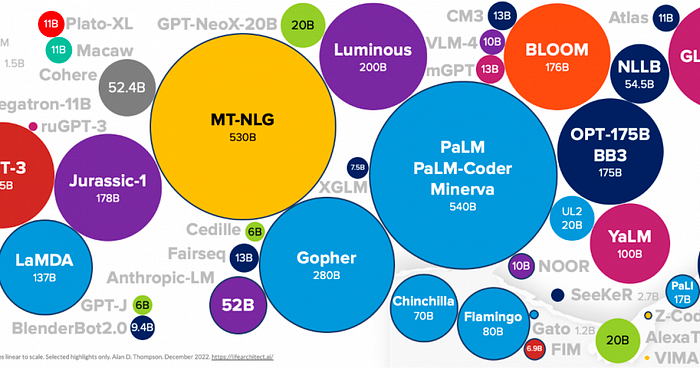

When we discuss distributed computing power for training, we generally mean training large language models. Small models do not demand significant computing power, so it is not cost-effective to take on the data privacy and engineering problems that distribution brings; centralized solutions are more efficient. Large language models, however, require immense computing power and are in the initial stage of explosive growth. From 2012 to 2018, the computational requirements of AI approximately doubled every four months. Large model training is currently the focus of computing power demand and is predicted to keep generating enormous incremental demand for the next 5–8 years.

With huge opportunities come significant challenges. Everyone knows the field is vast, but what exactly are the challenges? The key to identifying excellent projects in this track is finding teams that target these specific problems rather than entering the market blindly.

1.1 Overall Training Process

Take the training of a large model with 175 billion parameters as an example. Due to the massive scale of the model, parallel training needs to be carried out on many GPU devices. Suppose there is a centralized server room with 100 GPUs, each with 32GB of memory.

- Data Preparation: First, a large dataset is needed, which includes various data such as Internet information, news, books, etc. These data need to be preprocessed before training, including text cleaning, tokenization, vocabulary building, etc.

- Data Splitting: The processed data is split into multiple batches for parallel processing on multiple GPUs. Assume the batch size chosen is 512, meaning each batch contains 512 text sequences. Then, we divide the entire dataset into multiple batches, forming a batch queue.

- Data Transfer between Devices: At the beginning of each training step, the CPU takes a batch from the batch queue and sends the batch data to the GPU via the PCIe bus. Suppose the average length of each text sequence is 1024 tokens, then the data size of each batch is about 512 * 1024 * 4B = 2MB (assuming each token uses a 4-byte single-precision floating-point number). This data transfer process usually takes only a few milliseconds.

- Parallel Training: After each GPU device receives the data, it begins the forward pass and backward pass computations, calculating the gradient of each parameter. Since the model is very large, the memory of a single GPU cannot hold all the parameters, so we use model parallelism to distribute the model parameters across multiple GPUs.

- Gradient Aggregation and Parameter Update: After the backward pass computation is completed, each GPU obtains the gradients of some parameters. Then, these gradients need to be aggregated among all GPU devices to calculate the global gradient. This requires data transfer through the network. Suppose a 25Gbps network is used, then transferring 700GB of data (assuming each parameter uses a single-precision floating-point number, then 175 billion parameters are about 700GB) takes about 224 seconds. Then, each GPU updates the parameters it stores based on the global gradient.

- Synchronization: After the parameter update, all GPU devices need to synchronize to ensure that they use consistent model parameters for the next step of training. This also requires data transfer through the network.

- Repeat Training Steps: Repeat the above steps until all batches are trained, or a predetermined number of training rounds (epochs) are completed.

This process involves a large amount of data transfer and synchronization, which can become a bottleneck in training efficiency. Therefore, optimizing network bandwidth and latency, as well as using efficient parallel and synchronization strategies, are crucial for large-scale model training.
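The figures quoted in the steps above can be reproduced with a few lines of arithmetic. The sketch below is only a back-of-envelope check under the same illustrative assumptions as the text (4-byte values, batch size 512, sequence length 1024, a 25Gbps link); the function names are my own and it ignores latency and protocol overhead.

```python
# Back-of-envelope check of the walkthrough's numbers.
# All inputs are the illustrative assumptions from the text, not measurements.

BYTES_PER_VALUE = 4  # 4-byte values, as assumed in the text


def batch_bytes(batch_size: int, seq_len: int) -> int:
    """Size of one batch of token data sent from CPU to GPU."""
    return batch_size * seq_len * BYTES_PER_VALUE


def param_bytes(num_params: int) -> int:
    """Memory needed to hold every parameter (or one full gradient)."""
    return num_params * BYTES_PER_VALUE


def transfer_seconds(num_bytes: int, link_gbps: float) -> float:
    """Ideal time to push num_bytes through a link, ignoring latency."""
    return num_bytes * 8 / (link_gbps * 1e9)


batch = batch_bytes(512, 1024)           # 2,097,152 bytes = 2 MiB
params = param_bytes(175_000_000_000)    # 700,000,000,000 bytes = 700 GB
sync = transfer_seconds(params, 25)      # 224 seconds

print(f"batch: {batch / 2**20:.0f} MiB")
print(f"parameters: {params / 1e9:.0f} GB")
print(f"gradient sync at 25 Gbps: {sync:.0f} s")
```

The gap between a 2MB input batch and a 700GB gradient exchange is the reason the rest of this section concentrates on communication rather than data loading.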

1.2 Communication Overhead Bottleneck:

It’s worth noting that the bottleneck of communication is also why distributed computing power networks CANNOT currently perform training for large language models.

Nodes need to frequently exchange information to work together, which creates communication overhead. For large language models, due to the enormous number of model parameters, this problem is particularly severe. Communication overhead can be divided into several aspects:

- Data Transfer: During training, nodes need to frequently exchange model parameters and gradient information. This requires the transmission of a large amount of data in the network, consuming a significant amount of network bandwidth. If the network conditions are poor or the distance between computing nodes is large, the delay in data transfer will be high, further increasing the communication overhead.

- Synchronization Issue: Nodes need to work together during training to ensure its correct progress. This requires frequent synchronization operations between nodes, such as updating model parameters, calculating global gradients, etc. These synchronization operations need to transfer a large amount of data in the network and need to wait for all nodes to complete the operation, which leads to a large amount of communication overhead and waiting time.

- Gradient Accumulation and Update: During training, each node needs to calculate its gradient and send it to other nodes for accumulation and update. This requires the transfer of a large amount of gradient data in the network and needs to wait for all nodes to complete the computation and transfer of the gradient, which is another reason for the large communication overhead.

- Data Consistency: It is necessary to ensure that the model parameters of each node remain consistent. This requires frequent data verification and synchronization operations between nodes, which leads to a significant amount of communication overhead.

Although there are some methods to reduce communication overhead, such as parameter and gradient compression, efficient parallel strategies, etc., these methods may introduce additional computational burdens or have negative effects on the training effect of the model. Moreover, these methods cannot completely solve the problem of communication overhead, especially in situations where network conditions are poor or the distance between computing nodes is large.
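To make the trade-off of gradient compression concrete, here is a minimal sketch of one common approach, top-k sparsification: only the largest-magnitude gradient entries are transmitted. This is an illustration I am adding, not a method described by any project in this article; the function names and the sample gradient are hypothetical.

```python
def top_k_sparsify(gradient: list[float], k: int) -> list[tuple[int, float]]:
    """Keep only the k largest-magnitude entries as (index, value) pairs."""
    ranked = sorted(range(len(gradient)), key=lambda i: abs(gradient[i]), reverse=True)
    kept = sorted(ranked[:k])
    return [(i, gradient[i]) for i in kept]


def densify(sparse: list[tuple[int, float]], length: int) -> list[float]:
    """Rebuild a dense gradient, filling the dropped entries with zero."""
    dense = [0.0] * length
    for index, value in sparse:
        dense[index] = value
    return dense


# Hypothetical gradient of eight values.
gradient = [0.02, -1.5, 0.3, 0.0, 2.1, -0.01, 0.7, -0.4]
sparse = top_k_sparsify(gradient, k=2)
restored = densify(sparse, len(gradient))

# Two (index, value) pairs travel instead of eight values, but the receiver
# only sees an approximation: the dropped entries are the price paid.
dropped_mass = sum(abs(g - r) for g, r in zip(gradient, restored))
print(sparse)
print(restored)
print(round(dropped_mass, 2))
```

The sender pays for an extra sort and the receiver works with a lossy gradient, which is exactly the "additional computational burden" and "negative effect on training" mentioned above.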

1.2.1 For instance:

a) Decentralized Distributed Computing Networks

The GPT-3 model contains 175 billion parameters. If we use single-precision floating-point representation (4 bytes per parameter), storing these parameters would require ~700GB of memory. In distributed training, these parameters need to be frequently transmitted and updated between computing nodes.

Assuming we have 100 computing nodes and each node needs to update all parameters at each step, each step would require transmitting about 70TB of data (700GB*100). If we optimistically assume that each step takes 1s, then 70TB of data would need to be transmitted per second. This demand for bandwidth far exceeds the capacity of most networks, and it raises questions of feasibility.

In reality, due to communication latency and network congestion, data transmission time might far exceed 1s. This means that computing nodes might spend most of their time waiting for data transmission rather than performing actual computations. This greatly reduces training efficiency, and this decrease in efficiency isn’t something that can simply be resolved by waiting. It’s the difference between feasibility and infeasibility and can render the entire training process unworkable.
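A rough calculation shows how quickly waiting dominates. The sketch below uses the figures from this example (700GB of parameters, 100 nodes, a 1s step) and, as an additional assumption of mine, a node on a 1Gbps link that cannot overlap communication with computation; the function names are hypothetical.

```python
PARAM_BYTES = 700e9   # ~700 GB of parameters, as in the text
NUM_NODES = 100


def aggregate_bytes_per_step(param_bytes: float, num_nodes: int) -> float:
    """Total traffic if every node exchanges all parameters each step."""
    return param_bytes * num_nodes


def required_tbps(bytes_per_step: float, step_seconds: float) -> float:
    """Aggregate bandwidth (terabits per second) needed to finish in time."""
    return bytes_per_step * 8 / step_seconds / 1e12


def compute_utilization(compute_seconds: float, transfer_seconds: float) -> float:
    """Share of wall-clock time spent computing when a node must wait for
    the transfer to finish before starting the next step (no overlap)."""
    return compute_seconds / (compute_seconds + transfer_seconds)


traffic = aggregate_bytes_per_step(PARAM_BYTES, NUM_NODES)   # 70 TB
bandwidth = required_tbps(traffic, step_seconds=1.0)          # 560 Tbps

# Assumption: 1 s of compute per step, then 700 GB over a 1 Gbps link.
transfer = PARAM_BYTES * 8 / 1e9                              # 5600 s
utilization = compute_utilization(1.0, transfer)

print(f"traffic per step: {traffic / 1e12:.0f} TB")
print(f"aggregate bandwidth needed: {bandwidth:.0f} Tbps")
print(f"time spent computing: {utilization:.4%}")
```

Under these assumptions a node would spend well under 0.1% of its time on useful work, which is what "the difference between feasibility and infeasibility" means in practice.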

b) Centralized Data Centers

Even within centralized data center environments, training large models still requires intensive communication optimization.

In centralized data center environments, high-performance computing devices form a cluster and share computing tasks over a high-speed network. However, even in this high-speed environment, communication overhead remains a bottleneck when training models with very large parameter counts, because the parameters and gradients of the model need to be frequently transmitted and updated among the computing devices.

As previously mentioned, assume we have 100 computing nodes, each with a network bandwidth of 25Gbps. If each server must update all parameters at each training step, transmitting approximately 700GB of data would take ~224 seconds per training step. Leveraging the advantage of centralized data centers, developers can optimize network topology internally and use technologies like model parallelism to significantly reduce this time.

By contrast, if conducting the same training in a distributed environment, let’s still assume 100 computing nodes, spread globally, with an average network bandwidth of only 1Gbps per node. In this case, transmitting the same 700GB of data would take ~5600 seconds, significantly longer than in a centralized data center. Moreover, due to network latency and congestion, the actual time required might be even longer.

However, compared to a decentralized distributed computing network, optimizing communication overhead in a centralized data center environment is relatively easy. In centralized data centers, computing devices usually connect to the same high-speed network, with relatively good bandwidth and latency. In a distributed computing network, computing nodes might be scattered across the globe, potentially with inferior network conditions, exacerbating the issue of communication overhead.

OpenAI trained GPT-3 with model parallelism to address communication overhead, an approach popularized by frameworks such as NVIDIA’s Megatron-LM. Model parallelism reduces the parameter load and communication overhead for each device by dividing the model’s parameters and processing them in parallel across multiple GPUs. Each device is only responsible for storing and updating a portion of the parameters. At the same time, a high-speed interconnect network is used during training, and network topology is optimized to reduce communication path length.
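The idea that each device stores and processes only a slice of the parameters can be shown on a toy matrix multiplication. The sketch below splits a weight matrix column-wise across two simulated devices and checks that the combined result matches the unsharded computation. It is a plain-Python illustration in the spirit of tensor (intra-layer) model parallelism, not code from Megatron or any production framework, and the helper names are hypothetical.

```python
def matmul(x: list[list[float]], w: list[list[float]]) -> list[list[float]]:
    """Plain matrix multiplication: (rows x inner) @ (inner x cols)."""
    rows, inner, cols = len(x), len(w), len(w[0])
    return [[sum(x[r][i] * w[i][c] for i in range(inner)) for c in range(cols)]
            for r in range(rows)]


def split_columns(w: list[list[float]], num_devices: int) -> list[list[list[float]]]:
    """Give each device an equal, contiguous block of weight columns."""
    cols = len(w[0])
    assert cols % num_devices == 0, "columns must divide evenly across devices"
    width = cols // num_devices
    return [[row[d * width:(d + 1) * width] for row in w] for d in range(num_devices)]


def concat_columns(parts: list[list[list[float]]]) -> list[list[float]]:
    """Stitch per-device outputs back together, row by row."""
    return [[value for part in parts for value in part[r]]
            for r in range(len(parts[0]))]


x = [[1, 2], [3, 4]]                  # activations, replicated on each device
w = [[1, 0, 2, 1], [0, 1, 1, 3]]      # full weight matrix (2 x 4)

full = matmul(x, w)                   # what a single large device would compute
shards = split_columns(w, num_devices=2)
partials = [matmul(x, shard) for shard in shards]   # each device holds half of w
sharded = concat_columns(partials)

print(full)
print(sharded)
```

Each device holds half of the weights, but the partial outputs still have to be gathered at every layer, which is why this technique depends on a fast interconnect.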

1.3 Why Decentralized Computing Networks Can’t Achieve These Optimizations

The optimizations could be achieved, but their effectiveness is limited compared to centralized data centers.

- Network Topology Optimization: In a centralized data center, one can directly control network hardware and layout, so network topology can be designed and optimized as needed. However, in a distributed environment, computing nodes are scattered in different geographical locations, say one in China, and one in the U.S., making direct control over their network connections impossible. Though data transmission paths can be optimized via software, it’s less effective than direct hardware network optimization. Besides, due to geographical differences, network latency and bandwidth also vary greatly, further limiting the effect of network topology optimization.

- Model Parallelization: Model parallelization is a technique that splits model parameters across multiple computing nodes, thus improving training speed through parallel processing. This method usually requires frequent data transfer between nodes, hence high network bandwidth and low latency are needed. In centralized data centers where the network bandwidth is high and latency is low, model parallelization can be very effective. However, in distributed environments, due to poor network conditions, model parallelization faces significant constraints.

1.4 Data Security and Privacy Challenges

Almost every step involving data processing and transmission can affect data security and privacy:

- Data Allocation: Training data need to be distributed to various nodes involved in the computation. At this stage, data might be maliciously used or leaked across distributed nodes.

- Model Training: During training, each node uses the data allocated to it to perform calculations and then outputs model parameter updates or gradients. During this process, if the calculation process of a node is compromised or the results are maliciously interpreted, data leakage may occur.

- Parameter and Gradient Aggregation: Outputs from each node need to be aggregated to update the global model. Communication during this aggregation process might also leak information about the training data.

What are the solutions for data privacy issues?

- Secure Multiparty Computation: SMC has been successfully applied in some specific, smaller-scale computational tasks. However, in large-scale distributed training tasks, due to its high computational and communication overhead, it is not yet widely applied.

- Differential Privacy: Used in some data collection and analysis tasks, like Chrome’s user statistics. But in large-scale deep learning tasks, DP can impact the accuracy of the model. Moreover, designing appropriate noise generation and addition mechanisms also poses a challenge.

- Federated Learning: Applied in some edge device model training tasks, like Android keyboard vocabulary prediction. But in larger-scale, distributed training tasks, FL faces issues like high communication overhead and complex coordination.

- Homomorphic Encryption: It has already been applied successfully in tasks with lower computational complexity. However, in large-scale distributed training tasks, due to its high computational overhead, it is not yet widely applied.
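As one concrete illustration of the techniques listed above, the following sketch shows additive secret sharing, a basic building block of secure multiparty computation, used to sum private values so that no single node sees another node’s input. It is a teaching example under my own assumptions: the values are small integers (real gradients would need fixed-point encoding), the modulus is an arbitrary large prime, and Python’s `random` module stands in for a cryptographically secure generator such as `secrets`.

```python
import random

MODULUS = 2**61 - 1  # shares live modulo a large prime (illustrative choice)


def make_shares(secret: int, num_shares: int, rng: random.Random) -> list[int]:
    """Split a secret into random shares that sum to it modulo MODULUS."""
    shares = [rng.randrange(MODULUS) for _ in range(num_shares - 1)]
    last = (secret - sum(shares)) % MODULUS
    return shares + [last]


def secure_sum(private_values: list[int], rng: random.Random) -> int:
    """Each node shares its value with every node; only partial sums are published."""
    n = len(private_values)
    # all_shares[i][j] is the share that node i sends to node j.
    all_shares = [make_shares(value, n, rng) for value in private_values]
    # Node j adds up the shares it received and reveals only that total.
    partial_sums = [sum(all_shares[i][j] for i in range(n)) % MODULUS
                    for j in range(n)]
    return sum(partial_sums) % MODULUS


# Hypothetical private inputs held by three nodes.
rng = random.Random(0)  # NOT secure; a real system would use the secrets module
total = secure_sum([12, 7, 30], rng)
print(total)
```

Note the cost: n nodes exchange n × n shares for every aggregated value, which hints at why the communication overhead becomes prohibitive when the "value" is hundreds of billions of gradient entries.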

Summing up

Each of the above methods has suitable scenarios and limitations. There is no single method that can entirely solve the data privacy issue in large model training in distributed computing networks.

Can the highly-anticipated ZK solve the data privacy problem during large model training?

In theory, Zero-Knowledge Proof can be used to ensure data privacy in distributed computations, allowing a node to prove that it has performed calculations as stipulated without revealing actual input and output data.

However, actually applying ZKP to large-scale distributed computing network training of large models faces the following bottlenecks:

- Increased computation and communication overhead: Constructing and verifying zero-knowledge proofs require substantial computational resources. Moreover, the communication overhead of ZKP is also high, as the proof itself needs to be transmitted. In the case of large model training, these overheads could become particularly significant. For example, if each mini-batch computation requires generating a proof, this would significantly increase the overall training time and cost.

- Complexity of ZK protocols: Designing and implementing a ZKP protocol suitable for large model training would be very complex. This protocol would need to handle large-scale data and complex computations, and it should be able to deal with potential error reporting.

- Hardware and software compatibility: Using ZKP requires specific hardware and software support, which might not be available on all distributed computing devices.

Summary

Applying ZKP to large-scale distributed computing network training of large models would require years of research and development, as well as more focus and resources from the academic community in this direction.

2. Distributed Computing — Model Inference

Another significant scenario for distributed computing is model inference. According to our judgment on the development path of large models, the demand for model training will gradually slow down after reaching a peak as large models mature. However, the need for model inference will exponentially increase with the maturation of large models and Artificial General Intelligence.

In comparison to training tasks, inference tasks generally have lower computational complexity and weaker data interactivity, making them more suitable for a distributed environment.

2.1 Challenges

Communication Latency:

In a distributed environment, communication between nodes is essential. In a decentralized distributed computing network, nodes might be scattered globally, making network latency a problem, especially for inference tasks that require real-time response.

Model Deployment and Update:

Models need to be deployed to each node. If a model is updated, each node needs to update its model, which can consume a large amount of network bandwidth and time.

Data Privacy:

Although inference tasks usually only need input data and the model, without needing to send back a large amount of intermediate data and parameters, the input data might still contain sensitive information, such as a user’s personal information.

Model Security:

In a decentralized network, models need to be deployed to untrusted nodes, which could lead to model leakage, raising issues of model property rights and misuse. This could also trigger security and privacy issues, as a node could infer sensitive information by analyzing the behavior of a model that handles sensitive data.

Quality Control:

Each node in a decentralized distributed computing network might have different computational capabilities and resources, which could make it challenging to ensure the performance and quality of inference tasks.

2.2 Feasibility

Computational Complexity:

During the training phase, models need to iterate repeatedly, and the training process involves calculating forward and backward propagation for each layer, including the computation of activation functions, loss functions, gradients, and weight updates. Thus, the computational complexity of model training is high.

During the inference phase, only forward propagation is needed to compute a prediction (for a language model, one forward pass per generated token). For example, in GPT-3, the input text needs to be converted into a vector, which is then propagated forward through each layer of the model (typically Transformer layers), finally producing an output probability distribution used to generate the next word. In Generative Adversarial Networks, the model generates an image based on the input noise vector. These operations only involve forward propagation of the model and do not require computation of gradients or updating of parameters, resulting in lower computational complexity.
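The difference in scale can be estimated with a widely used rule of thumb for dense Transformer models: roughly 2 FLOPs per parameter per token for a forward pass, and roughly 6 FLOPs per parameter per token for a full training step (forward plus backward). This approximation is not from the article, and the token counts below are assumptions chosen for illustration.

```python
def training_flops(num_params: int, num_tokens: int) -> int:
    """Rule-of-thumb cost of training: forward + backward over all tokens."""
    return 6 * num_params * num_tokens


def inference_flops(num_params: int, num_tokens: int) -> int:
    """Rule-of-thumb cost of inference: forward pass only."""
    return 2 * num_params * num_tokens


NUM_PARAMS = 175_000_000_000          # GPT-3-sized model, as in the text
TRAINING_TOKENS = 300_000_000_000     # assumed size of the training run
RESPONSE_TOKENS = 1_000               # assumed length of one inference request

train = training_flops(NUM_PARAMS, TRAINING_TOKENS)
infer = inference_flops(NUM_PARAMS, RESPONSE_TOKENS)

print(f"training run:      {train:.2e} FLOPs")
print(f"one 1k-token call: {infer:.2e} FLOPs")
print(f"ratio:             {train // infer:,}x")
```

A single request is many orders of magnitude cheaper than the training run, so it can plausibly be served by one node or a small group of nodes without the all-to-all gradient exchange that training requires.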

Data Interactivity:

During the inference phase, models usually process individual inputs, not large batches of data as in training. Each inference result also depends only on the current input and not on other inputs or outputs, eliminating the need for extensive data interaction and, hence, reducing communication pressure.

Take a generative image model as an example and suppose we use GANs to generate images. We only need to input a noise vector to the model, and the model will generate a corresponding image. In this process, each input generates only one output, and outputs do not depend on each other, eliminating the need for data interaction.

Take GPT-3 as another example; generating each subsequent word only requires the current text input and the model state. There’s no need for interaction with other inputs or outputs, leading to a weaker demand for data interactivity.

Summary

Whether it’s large language models or generative image models, inference tasks have relatively low computational complexity and data interactivity, making them more suitable for decentralized distributed computing networks. This is also the direction that most projects are currently focusing on.

3. Projects currently researching in this direction

The technical threshold and breadth of decentralized distributed computing networks are both high, and they also require hardware resource support, so we have not seen too many attempts. For example, let’s look at Together and Gensyn.ai:

3.1 Together.xyz

Together is a company focusing on open-sourcing large models and dedicated to decentralized AI computing solutions. Their aim is to make AI accessible and usable for anyone, anywhere. Together recently completed a seed round of funding led by Lux Capital, raising 20 million USD.

Together was co-founded by Chris, Percy, and Ce. Their motivation was that large model training requires extensive high-end GPU clusters and significant spending, so these resources, and the ability to train such models, are concentrated in a few large companies.

From my perspective, a reasonable roadmap for a distributed computing startup would be:

Step 1. Open Source Models

To achieve model inference in a decentralized distributed computing network, a prerequisite is that nodes must be able to acquire models at a low cost, which means that models to be used on a decentralized computing network need to be open-source (if a model can only be used under a restrictive license, implementation becomes more complex and costly). For instance, ChatGPT, as a closed-source model, is not suitable for execution on a decentralized computing network.

Therefore, it can be inferred that an invisible barrier for a company providing a decentralized computing network is the need for strong capabilities in large model development and maintenance. Developing and open-sourcing a powerful base model reduces dependence on third-party open-source models to some extent and solves the most basic problem of a decentralized computing network. It also helps prove that the computing network can effectively carry out the training and inference of large models.

This is what Together has done. The recently released RedPajama, which starts by reproducing the LLaMA training dataset, is a joint initiative of teams such as Together, Ontocord.ai, ETH DS3Lab, Stanford CRFM, and Hazy Research. Their goal is to develop a series of fully open-source large language models.

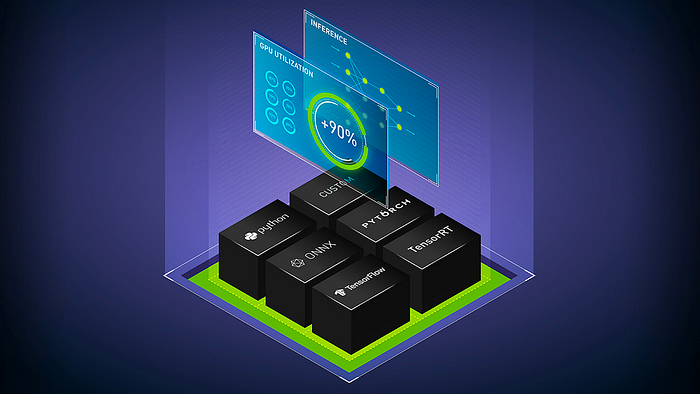

Step 2. Implementation of Distributed Computing in Model Inference

As mentioned in the previous two sections, compared to model training, the computational complexity and data interactivity of model inference are lower, which makes inference more suitable for a decentralized distributed environment.

Based on open-source models, the development team of Together has made a series of updates for the RedPajama-INCITE-3B model, such as implementing low-cost fine-tuning using LoRA, making the model run more smoothly on CPUs (especially on MacBook Pros using the M2 Pro processor). At the same time, although the scale of this model is small, its capabilities exceed other models of the same scale, and it has been applied in real-world scenarios such as law and social interactions.

Step 3. Implementation of Distributed Computing in Model Training

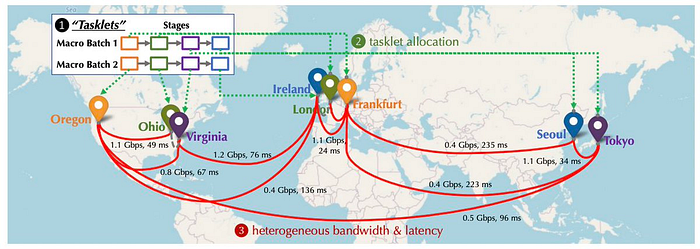

In the medium and long term, despite significant challenges and technical bottlenecks, serving the computing needs of large AI model training is definitely the most enticing opportunity. From its founding, Together began laying the groundwork for overcoming communication bottlenecks in decentralized training. They also published a related paper at NeurIPS 2022: “Overcoming Communication Bottlenecks for Decentralized Training”. We can summarize the main directions as follows:

Scheduling Optimization

During training in a decentralized environment, the connections between different nodes have different latencies and bandwidths, so it is important to assign tasks that require heavy communication to devices with faster connections. Together has built a cost model for scheduling strategies and uses it to optimize them, minimizing communication costs and maximizing training throughput. The Together team also found that even if the network is 100 times slower, the end-to-end training throughput is only 1.7 to 2.3 times slower. Therefore, closing the gap between distributed networks and centralized clusters through scheduling optimization is feasible.
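The intuition behind communication-aware scheduling can be shown with a toy example. The sketch below is not Together’s scheduler or cost model; it is a hypothetical greedy heuristic that pairs the most communication-heavy tasks with the fastest links, assuming transfers run in parallel and the slowest one gates the training step. The volumes and bandwidths are made up.

```python
def transfer_time(volume_gb: float, link_gbps: float) -> float:
    """Seconds to move volume_gb gigabytes over a link of link_gbps."""
    return volume_gb * 8 / link_gbps


def naive_assignment(volumes: list[float], bandwidths: list[float]) -> list[tuple[float, float]]:
    """Pair tasks and links in the order they happen to arrive."""
    return list(zip(volumes, bandwidths))


def greedy_assignment(volumes: list[float], bandwidths: list[float]) -> list[tuple[float, float]]:
    """Pair the heaviest communication tasks with the fastest links."""
    return list(zip(sorted(volumes, reverse=True), sorted(bandwidths, reverse=True)))


def step_time(assignment: list[tuple[float, float]]) -> float:
    """Transfers run in parallel, so the slowest one gates the step."""
    return max(transfer_time(volume, gbps) for volume, gbps in assignment)


# Hypothetical per-step communication volumes (GB) and link speeds (Gbps).
volumes = [1.0, 40.0, 5.0]
bandwidths = [10.0, 0.5, 1.0]

naive = step_time(naive_assignment(volumes, bandwidths))
greedy = step_time(greedy_assignment(volumes, bandwidths))

print(f"naive step time:  {naive:.0f} s")
print(f"greedy step time: {greedy:.0f} s")
```

With identical hardware, the step time drops from 640s to 40s simply by matching tasks to links, which is the kind of gain a scheduling cost model is meant to find systematically.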

Communication Compression Optimization

Together proposed communication compression for forward activation and backward gradients, introducing the AQ-SGD algorithm, which provides strict guarantees for the convergence of stochastic gradient descent. AQ-SGD can fine-tune large base models on slow networks (e.g., 500 Mbps), and compared with the end-to-end training performance in the centralized computing network (e.g., 10 Gbps) under uncompressed conditions, it is only 31% slower. In addition, AQ-SGD can be combined with the most advanced gradient compression techniques (such as QuantizedAdam) to achieve a 10% improvement in end-to-end speed.
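To give a feel for what compressing activations or gradients involves, here is a minimal sketch of plain uniform quantization: each 32-bit value is replaced by a small integer code, and the receiver reconstructs an approximation. This is deliberately not AQ-SGD itself, whose algorithm and convergence guarantees are described in Together’s paper; the function names, bit width, and sample values are my own assumptions.

```python
def quantize(values: list[float], bits: int) -> tuple[list[int], float, float]:
    """Map each value to an integer code in [0, 2**bits - 1]."""
    levels = 2 ** bits - 1
    low, high = min(values), max(values)
    scale = (high - low) / levels if high > low else 1.0
    codes = [round((v - low) / scale) for v in values]
    return codes, low, scale


def dequantize(codes: list[int], low: float, scale: float) -> list[float]:
    """Reconstruct approximate values from the integer codes."""
    return [low + code * scale for code in codes]


# Hypothetical activations to send to the next pipeline stage.
activations = [-1.0, -0.25, 0.0, 0.5, 1.0]
BITS = 4

codes, low, scale = quantize(activations, BITS)
restored = dequantize(codes, low, scale)

max_error = max(abs(a - r) for a, r in zip(activations, restored))
compression_ratio = 32 / BITS   # versus 32-bit floats, ignoring low/scale metadata

print(codes)
print(f"max error: {max_error:.4f} (bound: {scale / 2:.4f})")
print(f"compression: {compression_ratio:.0f}x")
```

The rounding error is bounded by half a quantization step; the hard part, which this sketch does not address, is keeping such errors from accumulating over many training steps.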

Project Summary

The Together team is well rounded: all members have strong academic backgrounds, and industry experts support everything from large model development and cloud computing to hardware optimization. Together has also shown a patient, long-term approach in its roadmap, from developing open-source large models, to testing idle computing power (like Macs) for model inference in distributed computing networks, to preparing for distributed computing power in large model training. It gives a feeling of a big payoff after extensive preparation :)

However, I have not seen many research achievements from Together in the incentive layer. I believe this is as important as technical research and development and is a key factor to ensure the development of the decentralized computing network.

3.2 Gensyn.ai

From Together’s technical path, we can roughly understand how decentralized computing networks might be applied to model training and inference, and where the corresponding R&D focus lies.

Another crucial aspect that cannot be overlooked is the design of the incentive layer/consensus algorithm for the computing network. For example, an excellent network needs to:

- Ensure that the benefits are attractive enough;

- Ensure that each miner gets the benefits they deserve, including anti-cheating and rewarding more work;

- Ensure tasks are reasonably scheduled and distributed among different nodes, avoiding a large number of idle nodes or overcrowding of some nodes;

- Ensure the incentive algorithm is simple and efficient, without causing excessive system burden and delay.

……

Let’s see how Gensyn.ai does it:

- Becoming a node

Firstly, in the computing network, solvers compete for the right to handle tasks submitted by users through bidding. Based on the size of the task and the risk of being caught cheating, solvers need to pledge a certain amount.

- Verification

While updating parameters, the solver generates multiple checkpoints (to ensure the transparency and traceability of the work) and regularly generates cryptographic proofs about the task (proofs of work progress).

When the solver completes work and produces some calculation results, the protocol will select a verifier. The verifier also pledges a certain amount (to ensure the verifier honestly performs verification) and decides which part of the computation results needs to be verified based on the provided proofs.

- If there is a disagreement between the solver and the verifier

A data structure based on Merkle trees is used to pinpoint the exact location of disagreement in the computation results. The entire verification operation is on-chain, and cheaters forfeit their pledged amount.
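The way a Merkle tree narrows a dispute down to one location can be sketched in a few lines. The code below is a simplified, off-chain illustration and not Gensyn’s actual protocol: it assumes a power-of-two number of checkpoints, treats each checkpoint as an opaque byte string, and uses hypothetical function names.

```python
import hashlib


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_tree(leaves: list[bytes]) -> list[list[bytes]]:
    """Return tree levels bottom-up: levels[0] holds leaf hashes and
    levels[-1] holds the root. Assumes a power-of-two leaf count."""
    level = [sha256(leaf) for leaf in leaves]
    levels = [level]
    while len(level) > 1:
        level = [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def first_disagreement(tree_a: list[list[bytes]], tree_b: list[list[bytes]]):
    """Walk down from the root and return the index of the leftmost leaf
    where the two trees differ, or None if the roots match."""
    top = len(tree_a) - 1
    if tree_a[top][0] == tree_b[top][0]:
        return None
    index = 0
    for depth in range(top - 1, -1, -1):
        left = 2 * index
        # Descend into the left child if it differs, otherwise the right one.
        index = left if tree_a[depth][left] != tree_b[depth][left] else left + 1
    return index


# Hypothetical checkpoints: the verifier's recomputation differs at step 5.
solver = [f"ckpt-{i}".encode() for i in range(8)]
verifier = list(solver)
verifier[5] = b"ckpt-5-recomputed"

disputed = first_disagreement(build_tree(solver), build_tree(verifier))
print(disputed)
```

With 8 checkpoints the walk compares the root and then one hash at each of the 3 lower levels, instead of all 8 leaves, and the saving grows logarithmically with the number of checkpoints.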

Project Summary

The design of the incentive and verification algorithms means that Gensyn.ai does not have to replay the entire computation task during verification. Instead, it only needs to replicate and verify part of the results based on the provided proofs, which greatly improves the efficiency of verification. At the same time, nodes only need to store part of the computation results, which also reduces the consumption of storage space and computational resources. Additionally, potential cheating nodes cannot predict which parts will be selected for verification, which reduces the incentive to cheat.
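Why unpredictable spot checks deter cheating can be seen from a simple probability estimate. The sketch below is my own simplified model, not Gensyn’s economic design: it assumes the verifier checks independently and uniformly chosen parts of the work, and the reward, stake, and fraud fraction are hypothetical numbers.

```python
def detection_probability(fraud_fraction: float, samples: int) -> float:
    """Chance that at least one of `samples` independent, uniformly random
    spot checks lands on a faked part of the work."""
    return 1 - (1 - fraud_fraction) ** samples


def expected_cheating_payoff(reward: float, stake: float,
                             fraud_fraction: float, samples: int) -> float:
    """Expected outcome for a cheater: keep the reward if undetected,
    lose the pledged stake if caught."""
    caught = detection_probability(fraud_fraction, samples)
    return (1 - caught) * reward - caught * stake


# Hypothetical scenario: 20% of checkpoints are faked, 10 spot checks,
# a reward of 100 and a pledged stake of 500 (arbitrary units).
p_caught = detection_probability(0.2, 10)
payoff = expected_cheating_payoff(reward=100, stake=500, fraud_fraction=0.2, samples=10)

print(f"probability of being caught: {p_caught:.1%}")
print(f"expected payoff of cheating: {payoff:.1f}")
```

In this toy setting cheating has a strongly negative expected payoff; choosing the stake size and number of checks so that this holds without pricing out honest participants is the parameter-setting problem discussed below.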

This way of verifying disagreements and discovering cheaters can quickly find errors in the computation process without needing to compare the entire computation result (starting from the root node of the Merkle tree, gradually traversing downwards). This is very effective when dealing with large-scale computation tasks.

In summary, Gensyn.ai’s incentive/verification layer design aims for simplicity and efficiency. But currently, this is only at the theoretical level. The specific implementation may still face the following challenges:

- In terms of the economic model, how to set appropriate parameters to effectively prevent fraud without imposing too high a threshold on participants.

- In terms of technical implementation, how to devise an effective periodic cryptographic inference proof is a complex issue requiring advanced cryptographic knowledge.

- On task distribution, how the computing network selects and assigns tasks to different solvers also requires the support of reasonable scheduling algorithms. Simply assigning tasks according to the bidding mechanism seems questionable in terms of efficiency and feasibility. For instance, nodes with strong computing power can handle larger tasks but may not participate in bidding (this involves the incentive issue of node availability), and nodes with low computing power may bid the highest but are not suitable for handling complex large-scale computation tasks.

4. A Bit of Reflection on the Future

The question of who needs a decentralized computational power network has never really been validated. The application of idle computing power in the training of large models, which have a huge demand for computing resources, seems to make the most sense and offers the largest room for imagination. However, constraints like communication and privacy force us to rethink:

Can we truly see hope in the decentralized training of large models?

If we step outside this generally agreed “most logical scenario”, would applying decentralized computing power to the training of small AI models not also be a large market? From a technical perspective, the current constraints are largely avoided because of the smaller scale and simpler architecture of these models. Moreover, from the market perspective, we have always thought that the training of large models will be a huge market from now into the future, but does the market for small AI models lack its own allure?

I don’t think so.

Compared to large models, small AI models are easier to deploy and manage, and they are more efficient in terms of processing speed and memory usage. In many application scenarios, users or companies do not need the more universal reasoning capability of large language models but are only focused on a very refined prediction target. Therefore, in most scenarios, small AI models are still a more feasible choice, and should not be overlooked too early in the tide of FOMO on large models.

Reference

https://www.together.xyz/blog/redpajama

https://docs.gensyn.ai/litepaper/

https://www.nvidia.com/en-in/deep-learning-ai/solutions/large-language-models/

https://indiaai.gov.in/article/training-data-used-to-train-llm-models

About Foresight Ventures

Foresight Ventures is dedicated to backing the disruptive innovation of blockchain for the next few decades. We manage multiple funds: a VC fund, an actively-managed secondary fund, a multi-strategy FOF, and a private market secondary fund, with AUM exceeding $400 million. Foresight Ventures adheres to the belief of a “Unique, Independent, Aggressive, Long-Term mindset” and provides extensive support for portfolio companies within a growing ecosystem. Our team is composed of veterans from top financial and technology companies like Sequoia Capital, CICC, Google, Bitmain, and many others.

Website: https://www.foresightventures.com/

Twitter: https://twitter.com/ForesightVen

Medium: https://foresightventures.medium.com

Substack: https://foresightventures.substack.com

Discord: https://discord.com/invite/maEG3hRdE3

Linktree: https://linktr.ee/foresightventures

Disclaimer: All articles by Foresight Ventures are not intended to be investment advice. Individuals should assess their own risk tolerance and make investment decisions prudently.